To find duplicate rows based on all columns in Pandas DataFrame, use the .duplicated() method.

You can accomplish this in various approaches depending on the specific requirement, such as finding duplicates based on all columns, a single column, multiple columns, or even considering the sorting order.

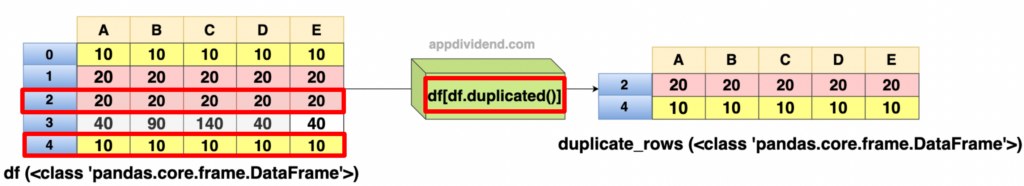

Approach 1: Select duplicate rows based on all columns

The .duplicated() does not modify the original DataFrame; it returns a Boolean Series, which can be used for indexing. By default, it marks duplicates as True except for the first occurrence.

import pandas as pd

df = pd.DataFrame({'A': [10, 20, 20, 40, 10],

'B': [10, 20, 20, 90, 10],

'C': [10, 20, 20, 140, 10],

'D': [10, 20, 20, 40, 10],

'E': [10, 20, 20, 40, 10]})

print(df)

print("After selecting all the duplicate rows")

duplicate_rows = df[df.duplicated()]

print(duplicate_rows)

Output

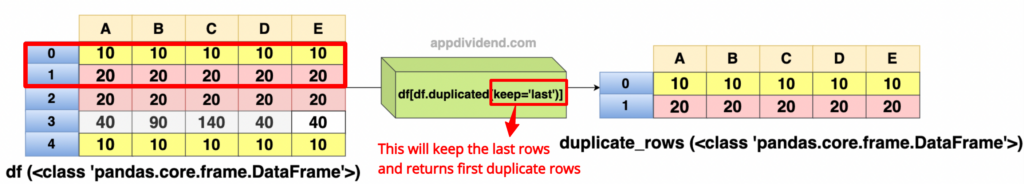

Approach 2: Getting the list of duplicate last rows based on all columns

If you want to get the last occurrences of the duplicates, use keep=’last’.

- keep=’first’ (default): Marks duplicates as True except for the first occurrence.

- keep=’last’: Marks duplicates as True except for the last occurrence.

- keep=False: Marks all duplicates as True.

import pandas as pd

df = pd.DataFrame({'A': [10, 20, 20, 40, 10],

'B': [10, 20, 20, 90, 10],

'C': [10, 20, 20, 140, 10],

'D': [10, 20, 20, 40, 10],

'E': [10, 20, 20, 40, 10]})

print(df)

print("Getting duplicate last rows based on all columns")

duplicate_rows = df[df.duplicated(keep='last')]

print(duplicate_rows)

Output

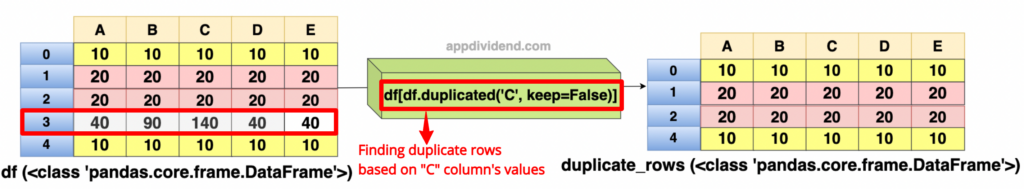

Approach 3: Selecting duplicate rows based on a single column

import pandas as pd

df = pd.DataFrame({'A': [10, 20, 20, 40, 10],

'B': [10, 20, 20, 90, 10],

'C': [10, 20, 20, 140, 10],

'D': [10, 20, 20, 40, 10],

'E': [10, 20, 20, 40, 10]})

print(df)

print("Finding duplicates based on a 'C' column")

rows = df[df.duplicated('C', keep=False)]

print(rows)

Output

In this code, we found duplicate rows based on the values in column ‘C’.

The duplicated() method returns a boolean series that is True for each row that is a duplicate of an earlier row in column ‘C’.

With keep=False, all duplicates are marked as True, not just the first occurrence.

In our DataFrame, rows 1 and 2 have the same value (20) in column ‘C’, and rows 0 and 4 have the same value (10) in column ‘C’. Therefore, these rows will be marked as duplicates.

So, in the output, we got rows 0, 1, 2, and 4, which are duplicates except row 3.

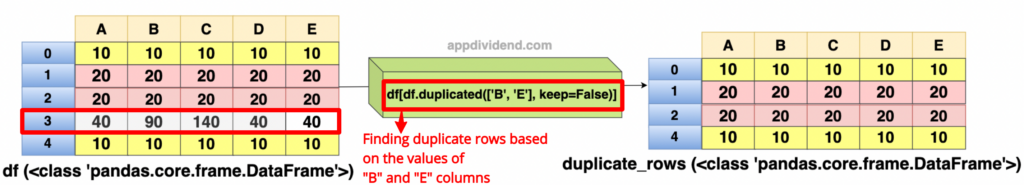

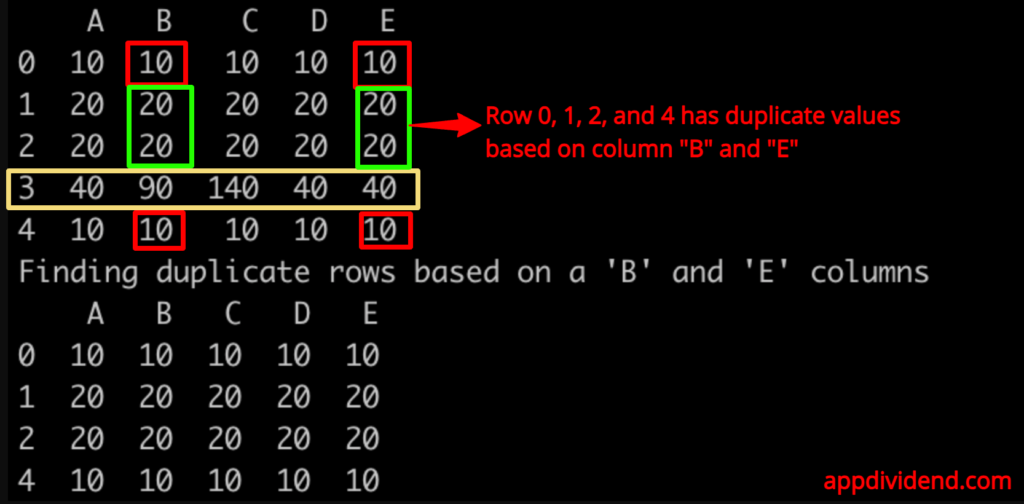

Approach 4: Selecting duplicate rows based on multiple columns

import pandas as pd

df = pd.DataFrame({'A': [10, 20, 20, 40, 10],

'B': [10, 20, 20, 90, 10],

'C': [10, 20, 20, 140, 10],

'D': [10, 20, 20, 40, 10],

'E': [10, 20, 20, 40, 10]})

print(df)

print("Finding duplicate rows based on a 'B' and 'E' columns")

rows = df[df.duplicated(['B', 'E'], keep=False)]

print(rows)

Output

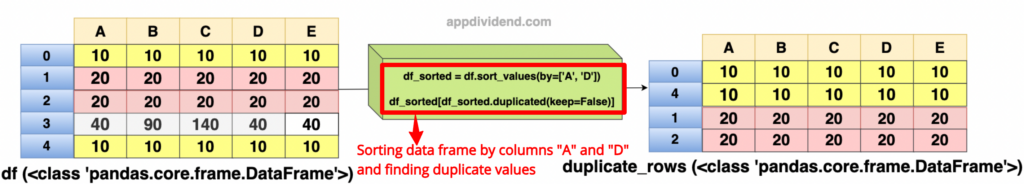

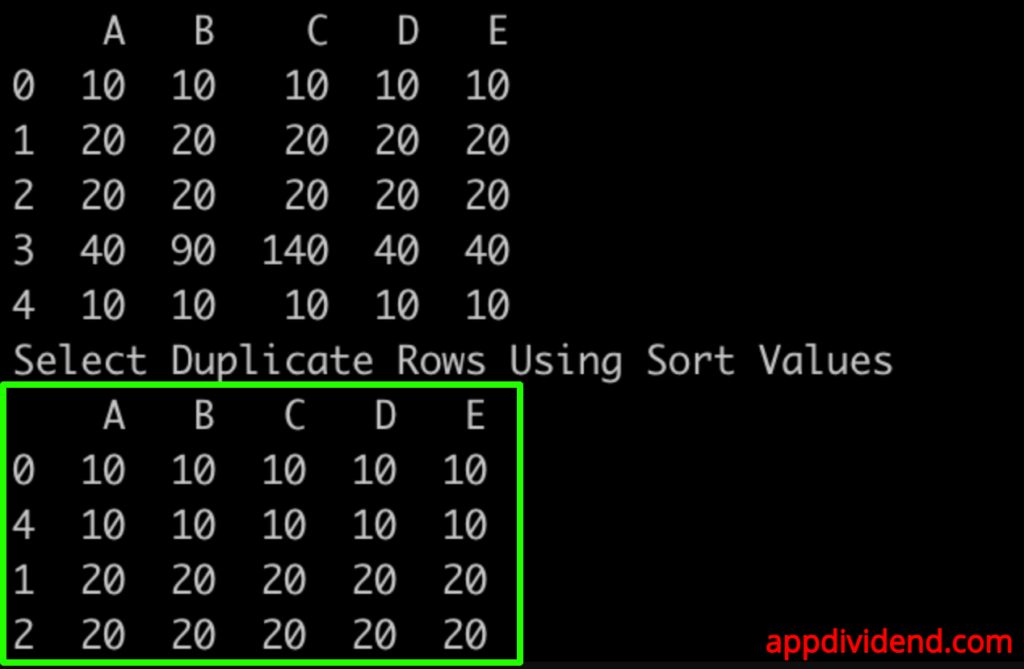

Approach 5: Selecting duplicate rows using sort values

To find duplicates considering the sorting order, first sort the DataFrame and then apply duplicated().

import pandas as pd

df = pd.DataFrame({'A': [10, 20, 20, 40, 10],

'B': [10, 20, 20, 90, 10],

'C': [10, 20, 20, 140, 10],

'D': [10, 20, 20, 40, 10],

'E': [10, 20, 20, 40, 10]})

print(df)

print("Select Duplicate Rows Using Sort Values")

# Sort by columns (replace 'A', 'D' with your column names)

df_sorted = df.sort_values(by=['A', 'D'])

# Then find duplicates

rows = df_sorted[df_sorted.duplicated(keep=False)]

print(rows)

Output

That’s all!